Best Practices for Table-Driven Testing in Golang

Table-driven tests are idiomatic Go for a reason: they make repetitive test cases cheap to write and easy to extend. But they are also easy to abuse. Plenty of Go test suites have giant tables nobody wants to read, magical helpers nobody trusts, and benchmarks copied from blog posts that do not measure anything useful.

This article is about using table-driven tests where they help, and not forcing them where they do not.

Use table-driven tests for variation, not for everything

A table is a good fit when the setup is mostly the same and the interesting part is how inputs vary.

Good candidates:

- parsers

- validators

- string and path normalization

- permission checks

- HTTP request decoding

- business rules with many edge cases

A table is usually a bad fit when each case needs very different setup, mocks, or timing behavior. In those situations, separate test functions are often clearer.

A quick rule:

- if the loop removes duplication and improves comparison, use a table

- if the loop hides the test story, do not

Start with a small, explicit case struct

Keep the case type close to the test unless it is shared intentionally.

    func SplitHostPort(addr string) (host, port string, err error) {
    	host, port, err = net.SplitHostPort(addr)
    	if err != nil {
    		return "", "", err
    	}
    	return host, port, nil
    }

    func TestSplitHostPort(t *testing.T) {
    	t.Parallel()

    	cases := []struct {
    		name     string
    		addr     string
    		wantHost string
    		wantPort string
    		wantErr  bool
    	}{
    		{
    			name:     "ipv4",
    			addr:     "127.0.0.1:8080",
    			wantHost: "127.0.0.1",
    			wantPort: "8080",
    		},
    		{
    			name:     "hostname",
    			addr:     "example.com:443",
    			wantHost: "example.com",
    			wantPort: "443",
    		},
    		{
    			name:    "missing port",
    			addr:    "example.com",
    			wantErr: true,
    		},
    	}

    	for _, tc := range cases {
    		tc := tc
    		t.Run(tc.name, func(t *testing.T) {
    			gotHost, gotPort, err := SplitHostPort(tc.addr)
    			if tc.wantErr {
    				if err == nil {
    					t.Fatalf("expected error, got nil")
    				}
    				return
    			}
    			if err != nil {
    				t.Fatalf("unexpected error: %v", err)
    			}
    			if gotHost != tc.wantHost || gotPort != tc.wantPort {
    				t.Fatalf("got (%q, %q), want (%q, %q)", gotHost, gotPort, tc.wantHost, tc.wantPort)
    			}
    		})
    	}
    }

A few things matter here:

- each case has a clear name

- the table holds data, not control flow

- the assertions stay local to the test

- the loop rebinds tc before t.Run

That last line avoids the classic loop variable capture bug. Since Go 1.22, each loop iteration gets its own copy of the variable, so the rebind is strictly needed only in modules that declare an older Go version. It is still a cheap habit in tests and keeps intent obvious.

Name cases like bugs and behaviors

Case names should help when a failure shows up in CI at 2 a.m.

Bad:

- case1

- test2

- edge

- normal

Better:

- missing port

- empty header is rejected

- trims trailing slash

- admin can read suspended account

Good case names usually describe behavior or the specific edge condition being checked.

Keep tables readable

The fastest way to ruin table-driven tests is to cram too much into each row.

A bad table often has:

- ten input fields

- three mock expectations

- five output fields

- custom flags to change helper behavior

- half the logic hidden in setup functions

When that happens, split the table by concern.

Instead of this:

- one TestUserService table with 25 fields

Prefer this:

- TestNormalizeEmail

- TestCreateUserValidation

- TestCreateUserConflict

- TestCreateUserAuditFields

Shorter tables tell a clearer story and fail more usefully.

Prefer standard library assertions first

Go’s standard library is enough for a lot of tests:

- if got != want { ... }

- errors.Is

- errors.As

- reflect.DeepEqual for simple cases, used carefully

You do not need testify in every package just to compare integers.

For richer comparisons, especially nested structs, slices, or diffs, cmp.Diff from github.com/google/go-cmp/cmp is often a better upgrade than a broad assertion framework.

Example:

    func TestNormalizeLabels(t *testing.T) {
    	cases := []struct {
    		name string
    		in   []string
    		want []string
    	}{
    		{
    			name: "trim, lower, dedupe",
    			in:   []string{" Ops ", "ops", "SRE"},
    			want: []string{"ops", "sre"},
    		},
    	}

    	for _, tc := range cases {
    		tc := tc
    		t.Run(tc.name, func(t *testing.T) {
    			got := NormalizeLabels(tc.in)
    			if diff := cmp.Diff(tc.want, got); diff != "" {
    				t.Fatalf("NormalizeLabels() mismatch (-want +got):\n%s", diff)
    			}
    		})
    	}
    }

Use cmp when the diff meaningfully improves failure output. Do not introduce it just because it looks more sophisticated.
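The test above calls a NormalizeLabels function that is never shown. A minimal sketch of what it might look like, assuming it trims whitespace, lowercases, drops duplicates, and keeps first-seen order (skipping empty labels is an extra assumption, not something the test requires):

```go
package main

import "strings"

// NormalizeLabels is a hypothetical implementation that satisfies the
// "trim, lower, dedupe" case: it trims whitespace, lowercases each label,
// and removes duplicates while preserving first-seen order.
func NormalizeLabels(in []string) []string {
	seen := make(map[string]struct{}, len(in))
	out := make([]string, 0, len(in))
	for _, s := range in {
		label := strings.ToLower(strings.TrimSpace(s))
		if label == "" {
			continue // assumption: blank labels are dropped
		}
		if _, dup := seen[label]; dup {
			continue
		}
		seen[label] = struct{}{}
		out = append(out, label)
	}
	return out
}
```

Because the result is a slice, this is the kind of function where a diff in the failure message earns its keep once the table grows past a couple of elements.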

Check errors like Go code, not like stringly typed scripts

Error assertions should usually answer one of these questions:

- did an error happen?

- is it a specific sentinel or wrapped error?

- does it contain structured context?

Example:

    var ErrDivisionByZero = errors.New("division by zero")

    func Divide(a, b int) (int, error) {
    	if b == 0 {
    		return 0, ErrDivisionByZero
    	}
    	return a / b, nil
    }

    func TestDivide(t *testing.T) {
    	cases := []struct {
    		name    string
    		a       int
    		b       int
    		want    int
    		wantErr error
    	}{
    		{name: "positive", a: 10, b: 2, want: 5},
    		{name: "negative", a: -10, b: 2, want: -5},
    		{name: "zero divisor", a: 10, b: 0, wantErr: ErrDivisionByZero},
    	}

    	for _, tc := range cases {
    		tc := tc
    		t.Run(tc.name, func(t *testing.T) {
    			got, err := Divide(tc.a, tc.b)
    			if tc.wantErr != nil {
    				if !errors.Is(err, tc.wantErr) {
    					t.Fatalf("expected error %v, got %v", tc.wantErr, err)
    				}
    				return
    			}
    			if err != nil {
    				t.Fatalf("unexpected error: %v", err)
    			}
    			if got != tc.want {
    				t.Fatalf("got %d, want %d", got, tc.want)
    			}
    		})
    	}
    }

Do not compare err.Error() unless the exact message is part of the contract.

Use helper functions, but keep them honest

Helpers are useful when they remove noise. They are harmful when they hide the entire test.

Good helper:

    func mustReadFile(t *testing.T, path string) []byte {
    	t.Helper()

    	b, err := os.ReadFile(path)
    	if err != nil {
    		t.Fatalf("read %s: %v", path, err)
    	}
    	return b
    }

Bad helper:

- creates half the world

- contains assertions unrelated to its name

- mutates shared state

- swallows errors and returns defaults

A simple rule: the reader should still understand the test without opening three other files.

Golden files are good for stable, reviewable output

Golden files work well when the output is large enough that inline expected strings are noisy:

- rendered config

- JSON output

- generated SQL

- formatted markdown

- CLI output

Example pattern:

    func TestRenderConfig(t *testing.T) {
    	input := Config{
    		AppName: "billing",
    		Port:    8080,
    	}

    	got, err := RenderConfig(input)
    	if err != nil {
    		t.Fatalf("RenderConfig(): %v", err)
    	}

    	want := mustReadFile(t, "testdata/render_config.golden")
    	if diff := cmp.Diff(string(want), got); diff != "" {
    		t.Fatalf("RenderConfig() mismatch (-want +got):\n%s", diff)
    	}
    }

A few rules make golden files less fragile:

- keep them under testdata/

- only use them for outputs humans can review

- avoid unstable fields like timestamps, random IDs, absolute paths

- if output has nondeterministic ordering, sort it before comparison

Do not use golden files to avoid thinking about assertions.

Fuzz tests complement tables; they do not replace them

Table-driven tests are good for known examples. Fuzz tests are good for finding weird examples you did not think of.

If you have parser, decoder, or normalization code, fuzzing is often worth adding.

Example:

    func FuzzSplitHostPort(f *testing.F) {
    	seeds := []string{
    		"127.0.0.1:80",
    		"example.com:443",
    		"[::1]:8080",
    	}
    	for _, s := range seeds {
    		f.Add(s)
    	}

    	f.Fuzz(func(t *testing.T, addr string) {
    		host, port, err := SplitHostPort(addr)
    		if err != nil {
    			return
    		}

    		// net.SplitHostPort accepts an empty host or port (":80", "example.com:"),
    		// so assert a round-trip property instead of non-emptiness.
    		host2, port2, err := SplitHostPort(net.JoinHostPort(host, port))
    		if err != nil {
    			t.Fatalf("re-split of %q failed: %v", addr, err)
    		}
    		if host2 != host || port2 != port {
    			t.Fatalf("round trip of %q: got (%q, %q), want (%q, %q)", addr, host2, port2, host, port)
    		}
    	})
    }

Start fuzzing from seed cases you already trust. That keeps the relationship between example-based tests and fuzz tests tight.

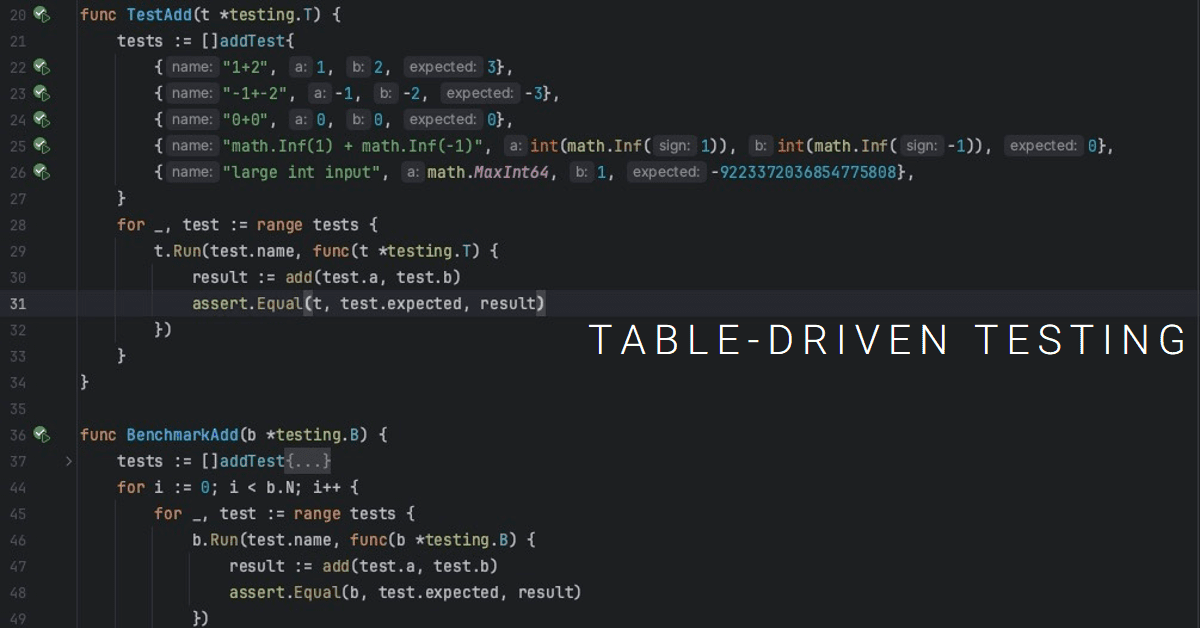

Benchmarks should measure one thing clearly

A lot of benchmark examples online are wrong or at least muddy.

Common mistakes:

- asserting inside the benchmark hot path

- nesting b.Run inside the main for i := 0; i < b.N; i++ loop

- doing expensive setup on every iteration unless that setup is part of what you want to measure

- forgetting b.ResetTimer() when setup is expensive

- benchmarking tiny toy cases that tell you nothing about production behavior

A better pattern:

    func BenchmarkNormalizeLabels(b *testing.B) {
    	input := []string{" Ops ", "ops", "SRE", "Platform", "platform"}

    	b.ReportAllocs()
    	for i := 0; i < b.N; i++ {
    		_ = NormalizeLabels(input)
    	}
    }

If you want variants, use sub-benchmarks:

    func BenchmarkParseID(b *testing.B) {
    	cases := []struct {
    		name  string
    		input string
    	}{
    		{name: "short", input: "usr_123"},
    		{name: "long", input: "usr_12345678901234567890"},
    	}

    	for _, tc := range cases {
    		tc := tc
    		b.Run(tc.name, func(b *testing.B) {
    			b.ReportAllocs()
    			for i := 0; i < b.N; i++ {
    				_, _ = ParseID(tc.input)
    			}
    		})
    	}
    }

This measures code, not assertion style.
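Neither benchmark above has setup expensive enough to need b.ResetTimer(). As a sketch of the case where it matters, assume a larger synthetic input; makeLabels and CountDistinct are hypothetical stand-ins for your own fixture builder and function under test:

```go
package main

import (
	"strconv"
	"strings"
	"testing"
)

// makeLabels builds n synthetic labels with padding, mixed case, and
// repeats (hypothetical helper; only 100 distinct values are generated).
func makeLabels(n int) []string {
	labels := make([]string, n)
	for i := range labels {
		labels[i] = " Team-" + strconv.Itoa(i%100) + " "
	}
	return labels
}

// CountDistinct is a hypothetical function under test: it counts labels
// that differ after trimming and lowercasing.
func CountDistinct(labels []string) int {
	seen := make(map[string]struct{}, len(labels))
	for _, l := range labels {
		seen[strings.ToLower(strings.TrimSpace(l))] = struct{}{}
	}
	return len(seen)
}

func BenchmarkCountDistinctLarge(b *testing.B) {
	input := makeLabels(100_000) // setup: not what we want to measure

	b.ReportAllocs()
	b.ResetTimer() // discard the time and allocations spent on setup
	for i := 0; i < b.N; i++ {
		_ = CountDistinct(input)
	}
}
```

The input is built once, outside the loop, and the timer is reset right before the loop, so the reported numbers cover only CountDistinct.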

Parallel tests are useful, but shared state will punish you

t.Parallel() is a good tool for speeding up independent tests. It is also a good way to expose hidden test coupling.

Use parallelism when the test:

- does not mutate process-wide globals

- does not depend on wall-clock ordering

- does not share temp files, ports, env vars, or singleton state carelessly

If subtests are parallelized, rebind the loop variable and keep case data immutable.

Example:

    for _, tc := range cases {
    	tc := tc
    	t.Run(tc.name, func(t *testing.T) {
    		t.Parallel()

    		got := CanonicalizePath(tc.input)
    		if got != tc.want {
    			t.Fatalf("got %q, want %q", got, tc.want)
    		}
    	})
    }

If a test needs t.Setenv, remember that it changes the environment of the whole process for the duration of that test. The testing package does not allow it in parallel tests: calling t.Setenv after t.Parallel, or in a test with a parallel ancestor, panics. Tests that mutate the environment have to run serially.
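One way to keep such tests parallel is to stop reading the process environment directly and pass the lookup in. This is a sketch of that idea, not the only option; PortFromEnv and the APP_PORT variable are made-up names:

```go
package main

import (
	"strconv"
	"testing"
)

// PortFromEnv is a hypothetical config reader. It takes the lookup function
// as a parameter, so production code can pass os.Getenv while tests pass a
// fake backed by a map.
func PortFromEnv(getenv func(string) string) int {
	port, err := strconv.Atoi(getenv("APP_PORT"))
	if err != nil || port <= 0 || port > 65535 {
		return 8080 // assumed default for this sketch
	}
	return port
}

func TestPortFromEnv(t *testing.T) {
	t.Parallel()

	cases := []struct {
		name string
		env  map[string]string
		want int
	}{
		{name: "unset falls back to default", env: map[string]string{}, want: 8080},
		{name: "valid port is used", env: map[string]string{"APP_PORT": "9000"}, want: 9000},
		{name: "non-numeric value falls back to default", env: map[string]string{"APP_PORT": "abc"}, want: 8080},
	}

	for _, tc := range cases {
		tc := tc
		t.Run(tc.name, func(t *testing.T) {
			t.Parallel() // safe: no process-wide state is touched

			getenv := func(key string) string { return tc.env[key] }
			if got := PortFromEnv(getenv); got != tc.want {
				t.Fatalf("got %d, want %d", got, tc.want)
			}
		})
	}
}
```

Each case carries its own environment as plain data, which also fits the "table holds data" rule from earlier.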

Keep fixtures small and believable

Test data should resemble real usage enough to catch real bugs, but not so bloated that nobody can reason about it.

Bad fixtures:

- giant copied production payloads with hundreds of irrelevant fields

- JSON blobs nobody understands

- mocks returning impossible states

- stale examples that no longer match current business rules

Better fixtures:

- minimal but realistic

- named after the behavior they exercise

- close to the test unless reused intentionally

- updated when the domain rules change

If your fixture for “active user” still lacks fields that production requires, the test suite is lying to you.

Do not hide business meaning inside giant generic tables

Go generics can help build reusable test utilities, but generic TestCase[P, W] types are not automatically a win.

Sometimes a dedicated case struct is clearer:

    cases := []struct {
    	name      string
    	role      string
    	resource  string
    	action    string
    	wantAllow bool
    }{
    	// ...
    }

That is often more readable than abstract Params and Want fields, especially for domain tests.

Use generics when they remove real duplication across packages. Do not use them to make ordinary tests look more “advanced”.
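For comparison, here is roughly what the generic shape looks like. This is a sketch; TestCase, RunCases, accessParams, and canAccess are all hypothetical names:

```go
package main

import "testing"

// TestCase is a generic case type: P is the parameter type, W the wanted result.
type TestCase[P any, W comparable] struct {
	Name   string
	Params P
	Want   W
}

// RunCases runs fn for every case as a subtest and compares the result with Want.
func RunCases[P any, W comparable](t *testing.T, cases []TestCase[P, W], fn func(P) W) {
	t.Helper()
	for _, tc := range cases {
		tc := tc
		t.Run(tc.Name, func(t *testing.T) {
			if got := fn(tc.Params); got != tc.Want {
				t.Fatalf("got %v, want %v", got, tc.Want)
			}
		})
	}
}

// accessParams and canAccess stand in for a real permission check.
type accessParams struct{ Role, Resource, Action string }

func canAccess(p accessParams) bool {
	return p.Role == "admin" || p.Action == "read"
}

func TestCanAccess(t *testing.T) {
	RunCases(t, []TestCase[accessParams, bool]{
		{Name: "admin can delete", Params: accessParams{"admin", "account", "delete"}, Want: true},
		{Name: "viewer cannot delete", Params: accessParams{"viewer", "account", "delete"}, Want: false},
	}, canAccess)
}
```

It works, but the role, resource, and action now sit one level down inside Params, and the failure message can only say "got false, want true". That is the readability cost to weigh against the duplication saved.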

A useful shape for HTTP handler tests

Table-driven tests are especially handy for HTTP handlers when request construction is similar but inputs vary.

Example:

    func TestCreateUserHandler(t *testing.T) {
    	cases := []struct {
    		name       string
    		body       string
    		wantStatus int
    	}{
    		{
    			name:       "valid request",
    			body:       `{"email":"[email protected]","name":"Dev"}`,
    			wantStatus: http.StatusCreated,
    		},
    		{
    			name:       "invalid email",
    			body:       `{"email":"not-an-email","name":"Dev"}`,
    			wantStatus: http.StatusUnprocessableEntity,
    		},
    		{
    			name:       "malformed json",
    			body:       `{"email":"[email protected]"`,
    			wantStatus: http.StatusBadRequest,
    		},
    	}

    	for _, tc := range cases {
    		tc := tc
    		t.Run(tc.name, func(t *testing.T) {
    			req := httptest.NewRequest(http.MethodPost, "/users", strings.NewReader(tc.body))
    			req.Header.Set("Content-Type", "application/json")
    			rr := httptest.NewRecorder()

    			handler := http.HandlerFunc(CreateUserHandler)
    			handler.ServeHTTP(rr, req)

    			if rr.Code != tc.wantStatus {
    				t.Fatalf("status = %d, want %d, body = %s", rr.Code, tc.wantStatus, rr.Body.String())
    			}
    		})
    	}
    }

This keeps the variation in the table and the request mechanics in one obvious place.

A short checklist

When table-driven tests are working well, you can usually say yes to most of these:

- does the table reduce duplication instead of hiding intent?

- are case names useful in CI output?

- is the table small enough to read in one pass?

- are assertions local and obvious?

- are loop variables rebound before subtests?

- are errors checked with errors.Is or errors.As when appropriate?

- are helpers small and honest?

- are golden files used only for outputs worth reviewing?

- are fuzz tests covering parser-like code where randomness helps?

- are benchmarks measuring something real?

Table-driven tests are a tool, not a style badge. Use them where repeated structure exists. Stop when the table becomes harder to understand than the code it is testing.